AI in e-learning: where does it make sense in 2025 and where does it not yet?

Today, artificial intelligence can save time, refine content, and personalize it in e-learning. However, it can also generate nonsense and pose legal risks if deployed without careful consideration. This article explains where AI can help immediately, where it is better to be cautious, and how to implement the first pilot so that it truly adds value.

Where AI already makes sense today

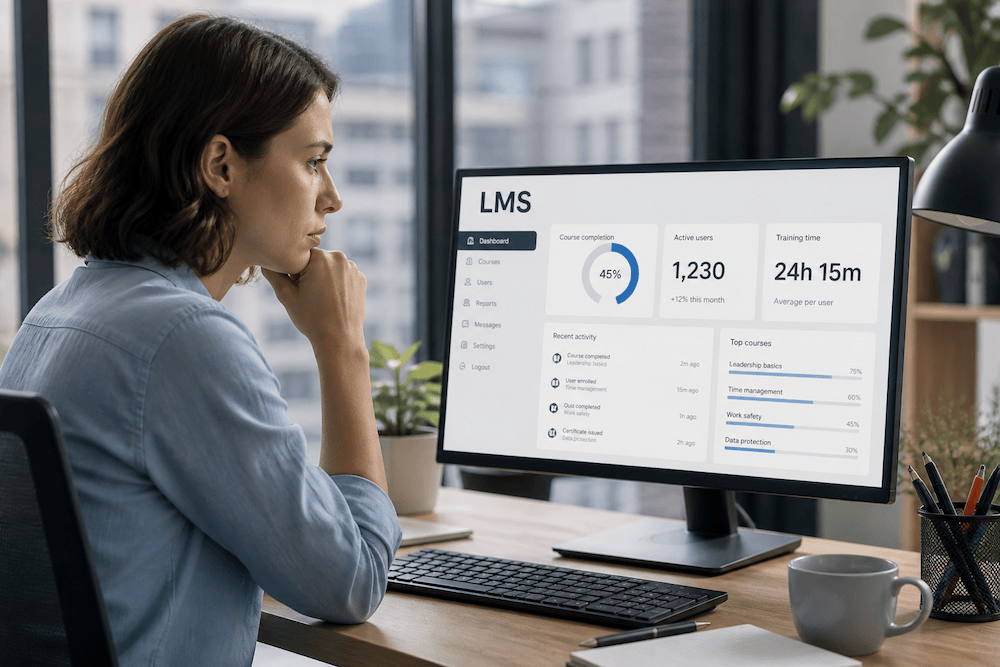

You will see the fastest benefits in tasks that are frequent, routine, and yet take up the most time for educational course creators and LMS administrators. Typical examples include preparing study syllabi, summarizing long texts into a more digestible form, and creating test questions.

AI can design the framework of a lesson, offer several formulations, and come up with distractors for tests; a human then checks the output, standardizes the terminology, and fine-tunes the style and wording. In practice, the result works as a quick draft that is easy to build on, which often saves tens of minutes on each module or part of the course.

AI is also very practical for localization and translation. For videos, it helps recognize speech, create transcripts, and generate basic subtitles with timing. In larger libraries of courses or files, AI significantly improves searchability: instead of clicking through a complex structure, users can ask a question in natural language and find relevant content, even if it is named differently.

Assisted learning directly within the education system also makes sense. Imagine a contextual "tutor" (an advisor or assistant within your system) that draws only from your verified materials, such as methodologies, internal wikis, or the courses themselves, and always shows the source of each answer. If it is unsure, it offers a link to a person or to more accurate material. This combination of quick help and traceability reduces student frustration and helpdesk workload.
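To make the idea of a source-citing tutor more concrete, here is a minimal sketch in Python. It is only an illustration under simplifying assumptions: the materials, the `Passage` class, the `tutor_answer` function, the stopword list, and the keyword-overlap matching are all hypothetical stand-ins, and a real system would typically use a proper search or language model instead. What the sketch shows is the behavior described above: answer only from verified materials, always return the source, and hand over to a person when unsure.

```python
import re
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # label shown to the learner so the answer is traceable
    text: str


# Hypothetical verified materials; in practice these would come from your LMS.
VERIFIED_MATERIALS = [
    Passage("Internal wiki: Leave policy",
            "Employees request vacation in the HR portal at least 14 days in advance."),
    Passage("Course: Fire safety, module 2",
            "In case of fire, leave the building by the nearest marked emergency exit."),
]

# Very common words are ignored so they do not create accidental matches.
STOPWORDS = {"a", "an", "the", "of", "in", "to", "is", "do", "i",
             "how", "what", "by", "at", "for", "and"}


def keywords(text):
    """Lowercase the text and return its set of non-trivial words."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def tutor_answer(question, min_overlap=2):
    """Answer only from verified passages; hand off to a human when unsure."""
    asked = keywords(question)
    best = max(VERIFIED_MATERIALS, key=lambda p: len(asked & keywords(p.text)))
    if len(asked & keywords(best.text)) < min_overlap:
        # Not enough evidence in the verified materials: do not guess.
        return {"answer": None, "source": None,
                "handoff": "Please contact the course guarantor."}
    return {"answer": best.text, "source": best.source, "handoff": None}
```

Calling `tutor_answer("How do I request vacation?")` returns the leave-policy passage together with its source label, while a question that the materials do not cover returns no answer and a handoff message instead.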

Finally, AI can enrich content recommendations. It is not necessary to promise "fully adaptive learning" right away; it is enough for the system to clearly explain why it is offering course X right now: it is based on your role, what you have already completed, and the skills that users in that position usually lack. Transparent recommendation logic increases trust and leads to greater willingness on the part of users to use the system.
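A transparent recommendation of this kind does not need a sophisticated model. The following Python sketch is a simplified illustration, not a description of how any particular LMS works; the `Course` class, the `recommend` function, and the shape of the input data are assumptions made for the example. Each recommended course comes with the reasons it was picked, built from the three signals mentioned above: the user's role, what they have already completed, and the skills people in that role usually lack.

```python
from dataclasses import dataclass


@dataclass
class Course:
    title: str
    roles: set   # roles the course is intended for
    skill: str   # main skill the course develops


def recommend(role, completed, common_gaps, catalog):
    """Return (title, reasons) pairs, best-supported recommendations first.

    role        -- the user's role, e.g. "sales"
    completed   -- set of course titles the user has already finished
    common_gaps -- dict mapping a role to skills people in it usually lack
    catalog     -- list of Course objects
    """
    results = []
    for course in catalog:
        if course.title in completed:
            continue  # never recommend what the user has already done
        reasons = []
        if role in course.roles:
            reasons.append(f"it is intended for your role ({role})")
        if course.skill in common_gaps.get(role, set()):
            reasons.append(f"people in your role often lack the skill '{course.skill}'")
        if reasons:
            reasons.append("you have not completed it yet")
            results.append((course.title, reasons))
    # Courses backed by more reasons come first.
    results.sort(key=lambda item: len(item[1]), reverse=True)
    return results
```

The list of reasons can be shown next to each recommended course, so the user sees why course X is offered rather than just that it is.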

Where to be cautious (or better yet, avoid) for now

The biggest mistake is to let AI autonomously generate or change content in areas where you are accountable for its accuracy, typically compliance, occupational safety, or healthcare. AI can serve as a source of suggestions and a "sparring partner" here, but the subject-matter expert and the lawyer must have the final say.

Caution is also warranted when it comes to automatic evaluation of open-ended answers. Without calibration on a sample, clear rubrics, and regular checks for impartiality, there is a risk of unfair assessment.
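Calibration on a sample can be as simple as having a human grade a set of answers with the same rubric and comparing the scores. The Python sketch below is one possible way to do that, under assumptions of our own: the `calibration_report` function, the field names `human`, `ai`, and `group`, and the tolerance of one point are all hypothetical. It reports how often the AI score lands close to the human score and whether the AI systematically scores one group of learners higher or lower than the human grader does.

```python
from statistics import mean


def calibration_report(samples, tolerance=1):
    """Compare AI scores with human scores on a graded sample.

    samples   -- list of dicts with keys "human", "ai" (numeric scores)
                 and "group" (any label used for the impartiality check)
    tolerance -- maximum score difference still counted as agreement
    """
    within = [abs(s["ai"] - s["human"]) <= tolerance for s in samples]

    # Collect signed differences (AI minus human) per group.
    diffs_by_group = {}
    for s in samples:
        diffs_by_group.setdefault(s["group"], []).append(s["ai"] - s["human"])

    return {
        # Share of answers where AI and human roughly agree.
        "agreement_rate": sum(within) / len(samples),
        # Positive value: AI scores this group higher than the human did.
        "mean_bias_by_group": {g: mean(d) for g, d in diffs_by_group.items()},
    }
```

A low agreement rate, or a clearly different bias between groups, is a signal to revise the rubric or keep a human in the grading loop before relying on automatic scores.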

Be wary of chatbots that do not explain where they get their information from. If the system cannot cite sources and cannot refer the learner to a human lecturer or course guarantor, it quickly loses credibility.

How to think about AI when choosing an LMS

On our portal, you can use the option to filter LMSs by "AI support." However, keep in mind that each LMS or supplier may understand AI support differently within their solution: for one system, it may be smart search in courses and documents, for another, it may be assistance in content creation, and for a third solution, it may be an assistant for students or a helpdesk.

Therefore, it is useful to look at AI in one of two ways. Either treat it as a set of specific features that address your real needs (faster course creation, better searchability, fewer support queries) and look for a solution that offers these functions directly, or take "AI support" as just a "nice bonus" that won't change anything significant in everyday operations but can earn you extra points with end users.

When choosing an LMS, it is also worth considering the extent to which AI in the system is suitable for corporate use. Ideally, it should be able to work with your own materials (guidelines, internal wiki, courses), and it should be clear where it gets its answers from, so that it is easy to verify that they are not just guesses. It is also helpful to know whether you can limit AI to selected user roles (for example, only for creators or only for students) or enable it only for certain parts of the system. Also check whether you will have access to overviews of tasks and actions performed by AI (for example, the history of conversations with users or an overview of recommended courses). These are details that are often overlooked when comparing systems, but in real-world operation, they determine whether AI will be a welcome feature in your LMS or not.